CS107

CS107 is the third course in Stanford’s introductory programming sequence. The CS106 courses provide you with a solid foundation in programming methodology and abstractions, and CS107 follows on this to build up and expand your breadth and depth of programming experience and techniques. The course will work from the C programming language down to the microprocessor to de-mystify the machine. With a complete understanding of how computer systems execute programs and manipulate data, you will become a more effective programmer, especially in dealing with issues of debugging, performance, portability, and robustness. Topics covered include: the C programming language, data representation, machine-level code, computer arithmetic, elements of code compilation, optimization of memory and runtime performance, and memory organization and management.

The class has two lectures a week and a weekly lab designed for hands-on learning and experimentation. There will be significant programming assignments and you can expect to work hard and be challenged by this course. Your effort can really pay off - once you master the machine and advance your programming skills to the next level, you will have powerful mojo to bring to any future project!

Notes

The best course I have learned about C programming language.

Some readings are copied from COMP201

What is Unix?

Unix: a set of standards and tools commonly used in software development.

- Macs are built on top of Unix

- Linux is built on top of Unix

Every Unix system works with the same tools and commands

What is the Command Line?

The command-line is a text-based interface to navigate a computer, instead of a Graphical User Interface (GUI).

The C Language

C was created around 1970 to make writing Unix and Unix tools easier.

C doesn’t have

- More advanced features like operator overloading, default arguments, pass by reference, classes and objects, ADTs, etc.

- Extensive libraries (no graphics, networking, etc.) – this means not much to learn C!

- many compiler and runtime checks (this may cause security vulnerabilities!)

C is procedural: you write functions, rather than define new variable types with classes and call methods on objects. C is small, fast and efficient.

/*

* hello.c

* This program prints a welcome message

* to the user.

*/

#include <stdio.h> // for printf

int main(int argc, char *argv[]) {

printf("Hello, world!\n");

return 0;

}

Import statements: C libraries are written with angle brackets. Local libraries have quotes: #include “lib.h”

Main function – entry point for the program Should always return an integer (0 = success)

Main parameters – main takes two parameters, both relating to the command line arguments used to execute the program. argc is the number of arguments in argv

argv is an array of arguments (char * is C string)

Boolean Variables

To make Boolean variables, (e.g. bool b = ____), you must import stdbool.h:

#include <stdbool.h> // for bool

ccc

Readings

The Strange Birth and Long Life of Unix “A damn stupid thing to do”—the origins of C

Number Representations

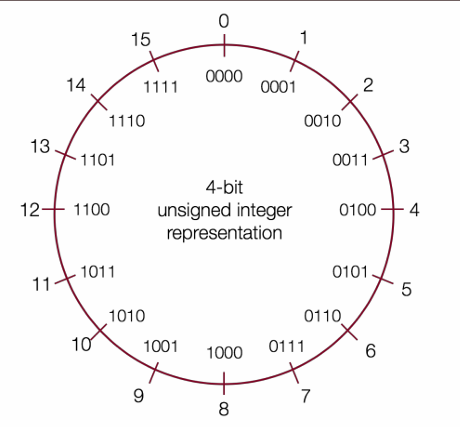

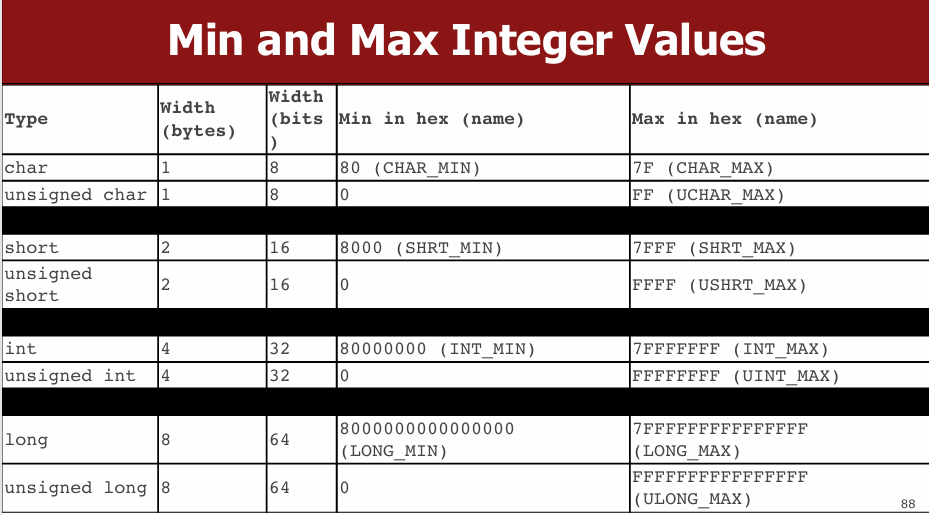

Signed Integers

We’ve only represented 15 of our 16 available numbers!

| Decimal | Positive | Negative |

|---|---|---|

| 0 | 0000 | 0000 |

| 1 | 0001 | 1111 |

| 2 | 0010 | 1110 |

| 3 | 0011 | 1101 |

| 4 | 0100 | 1100 |

| 5 | 0101 | 1011 |

| 6 | 0110 | 1010 |

| 7 | 0111 | 1001 |

| 8 | 1000 | 1000 |

| 9 | 1001(same as -7!) | NA |

| 10 | 1010(same as -6!) | NA |

| 11 | 1011(same as -5!) | NA |

| 12 | 1100(same as -4!) | NA |

| 13 | 1101(same as -3!) | NA |

| 14 | 1110(same as -2!) | NA |

| 15 | 1111(same as -1!) | NA |

two’s complement

Overflow and Underflow

If you exceed the maximum value of your bit representation, you wrap around or overflow back to the smallest bit representation.

If you go below the minimum value of your bit representation, you wrap around or underflow back to the largest bit representation.

Casting

What happens at the byte level when we cast between variable types? The bytes remain the same! This means they may be interpreted differently depending on the type.

C will implicitly cast the signed argument to unsigned, and then performs the operation assuming both numbers are non-negative.

Truncating Bit Representation

If we want to reduce the bit size of a number, C truncates the representation and discards the more significant bits.

unsigned int x = 128000;

unsigned short sx = x;

unsigned int y = sx;

What happens here? Let’s look at the bits in x (a 32-bit unsigned int), 128000:

0000 0000 0000 0001 1111 0100 0000 0000

When we cast x to a short, it only has 16-bits, and C truncates the number:

1111 0100 0000 0000

This is 62464! Unsigned numbers can lose info too. Here is what y looks like:

0000 0000 0000 0000 1111 0100 0000 0000// still 62464

readings

Map 256 Glitch To keep a Boeing Dreamliner flying, reboot once every 248 days Comair/Delta airline debacle caused by the overflow of 16-bit pointer Why Gandhi Is Such An Asshole In Civilization CVE-2019-3857

printf and Integers

As long as the value is a 32-bit type, printf will treat it according to the placeholder!

Expanding Bit Representations

We might not be able to convert from a bigger data type to a smaller data type, but we do want to always be able to convert from a smaller data type to a bigger data type. For unsigned values, we can add leading zeros to the representation (“zero extension”) For signed values, we can repeat the sign of the value for new digits (“sign extension”)

Bitwise Operators

You’re already familiar with many operators in C:

- Arithmetic operators: +, -, *, /, %

- Comparison operators: ==, !=, <, >, <=, >=

- Logical Operators: &&, ||, !

char

A char is a variable type that represents a single character or “glyph”.

Under the hood, C represents each char as an integer (its “ASCII value”).

Lowercase letters are 32 more than their uppercase equivalents (bit flip!)

char uppercaseA = 'A';// Actually 65

char lowercaseA = 'a';// Actually 97

char zeroDigit = '0’;// Actually 48

// prints out every lowercase character

for (char ch = 'a'; ch <= 'z'; ch++) {

printf("%c", ch);

}

Common ctype.h Functions

| Function | Description |

|---|---|

| isalpha(ch) | true if chis ’a’through ‘z’ or ’A’through ‘Z’ |

| islower(ch) | true if chis ’a’through ‘z’ |

| isupper(ch) | true if chis ’A’through ‘Z’ |

| isspace(ch) | true if chis a space, tab, new line, etc. |

| isdigit(ch) | true if chis ’0’through ‘9’ |

| toupper(ch) | returns uppercase equivalent of a letter |

| tolower(ch) | returns lowercase equivalent of a letter |

Remember: these returnthe new char, they cannot modify an existing char!

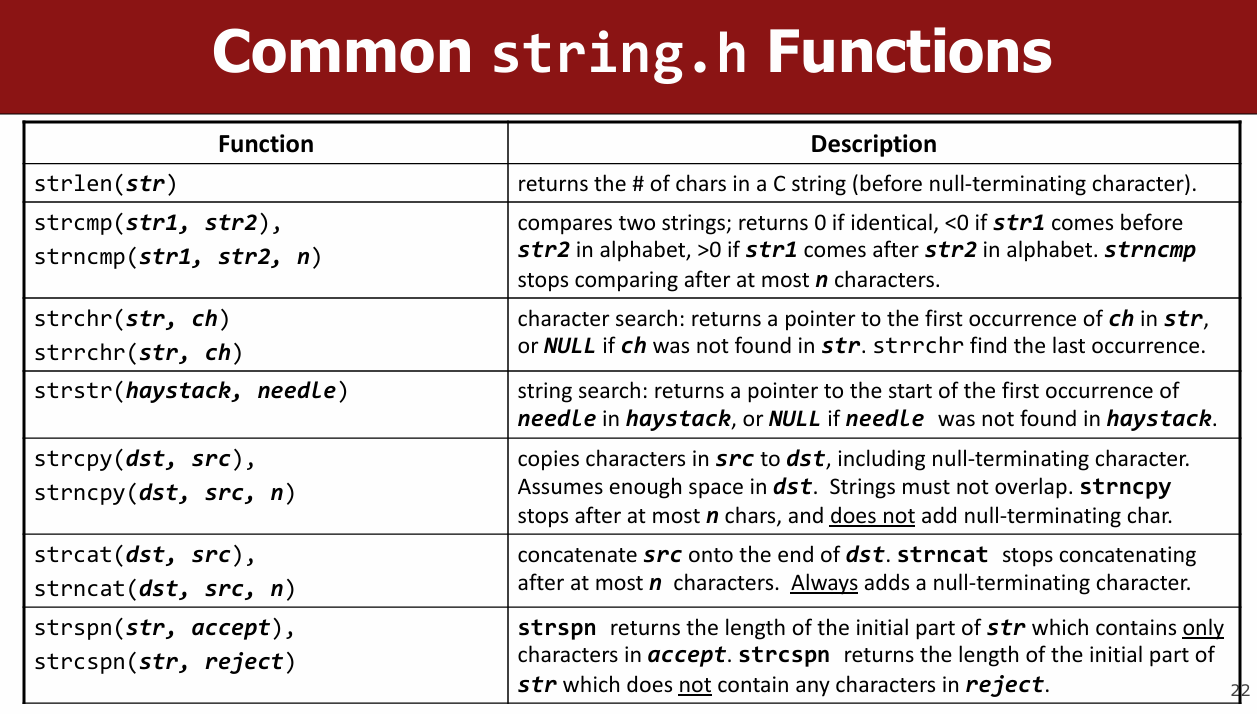

String Length

terminate every string with a ‘\0’ character.

Caution: strlen is O(N) because it must scan the entire string!

Save the value if you plan to refer to the length later.

C Strings As Parameters

When you pass a string as a parameter, it is passed as a char *. C passes the location of the first character rather than a copy of the whole array.

Common string.h Functions

Arrays of Strings

You can make an array of strings to group multiple strings together:

char *stringArray[5]; // space to store 5 char *s

You can also use the following shorthand to initialize a string array:

char *stringArray[] = {

"my string 1",

"my string 2",

"my string 3"

};

Pointers

- A pointer is a variable that stores a memory address.

- Because there is no pass-by-reference in C like in C++, pointers let us pass around the address of one instance of memory, instead of making many copies.

- One (8 byte) pointer can refer to any size memory location!

Character Arrays

When you declare an array of characters, contiguous memory is allocated on the stack to store the contents of the entire array.

An array variable refers to an entire block of memory. You cannot reassign an existing array to be equal to a new array.

char str[6] = "apple";

char str2[8] = "apple 2";

str = str2; // not allowed!

An array’s size cannot be changed once you create it; you must create another new array instead.

char *

When you declare a char pointer equal to a string

literal, the characters are not stored on the stack.

Instead, they are stored in a special area of

memory called the “data segment”. You cannot

modify memory in this segment.

sizeof

A char array is not a pointer; it refers to the entire array contents. In fact, sizeof returns the size of the entire array!

Pointer Arithmetic

When you do pointer arithmetic (with either a pointer or an array), you are adjusting the pointer by a certain number of places (e.g. characters).

Strings as Parameters

When you pass a char * string as a parameter, C makes a copy of the address stored in the char *, and passes it to the function. This means they both refer to the same memory location.

When you pass a char array as a parameter, C makes a copy of the address of the first array

Arrays of Strings

You can make an array of strings to group multiple strings together:

char *stringArray[5]; // space to store 5 char *s

Here’s an ASCII diagram to illustrate the memory layout:

stringArray: [ ptr1 ] [ ptr2 ] [ ptr3 ] [ ptr4 ] [ ptr5 ]

↓ ↓ ↓ ↓ ↓

NULL NULL NULL NULL NULL

You can also use the following shorthand to initialize a string array:

char *stringArray[] = {

"my string 1",

"my string 2",

"my string 3"

};

stringArray: [ ptr1 ] [ ptr2 ] [ ptr3 ]

↓ ↓ ↓

"my string 1" "my string 2" "my string 3"

Pointers

- A pointer is a variable that stores a memory address.

- Because there is no pass-by-reference in C like in C++, pointers let us pass around the address of one instance of memory, instead of making many copies.

Here’s an example of a pointer pointing to an integer:

int x = 42;

int *ptr = &x;

x: [ 42 ]

ptr: [ &x ] → [ 42 ]

- One (8 byte) pointer can refer to any size memory location!

Character Arrays

When you declare an array of characters, contiguous memory is allocated on the stack to store the contents of the entire array.

char str[6] = "apple";

str: [ 'a' ] [ 'p' ] [ 'p' ] [ 'l' ] [ 'e' ] [ '\0' ]

An array variable refers to an entire block of memory. You cannot reassign an existing array to be equal to a new array.

char str[6] = "apple";

char str2[8] = "apple 2";

str = str2; // not allowed!

An array’s size cannot be changed once you create it; you must create another new array instead.

char *

When you declare a char pointer equal to a string literal, the characters are not stored on the stack. Instead, they are stored in a special area of memory called the “data segment”. You cannot modify memory in this segment.

char *str = "apple";

str: [ ptr ] → "apple" (in data segment)

sizeof

A char array is not a pointer; it refers to the entire array contents. In fact, sizeof returns the size of the entire array!

char str[6] = "apple";

printf("%zu\n", sizeof(str)); // Outputs 6

str: [ 'a' ] [ 'p' ] [ 'p' ] [ 'l' ] [ 'e' ] [ '\0' ]

sizeof(str): 6

Pointer Arithmetic

When you do pointer arithmetic (with either a pointer or an array), you are adjusting the pointer by a certain number of places (e.g., characters).

char str[] = "hello";

char *ptr = str;

ptr += 2;

str: [ 'h' ] [ 'e' ] [ 'l' ] [ 'l' ] [ 'o' ] [ '\0' ]

ptr: ↑

Strings as Parameters

When you pass a char * string as a parameter, C makes a copy of the address stored in the char *, and passes it to the function. This means they both refer to the same memory location.

void printString(char *str) {

printf("%s\n", str);

}

str (in function): [ ptr ] → original string

When you pass a char array as a parameter, C makes a copy of the address of the first array element, and passes it (as a char *) to the function.

void printString(char str[]) {

printf("%s\n", str);

}

str (in function): [ ptr ] → original array

Strings and Memory

These memory behaviors explain why strings behave the way they do:

- If we make a variable to store a string literal that is a char[], we can modify the characters because its memory lives in our stack space.

- If we make a variable to store a string literal that is a char *, we cannot modify the characters because its memory lives in the data segment.

- We can set a char*equal to another value, because it is just a pointer.

- We cannot set a char[] equal to another value, because it is not a pointer; it refers to the block of memory reserved for the original array.

- If we change characters in a string passed to a function, these changes will persist outside of the function.

- When we pass a char array as a parameter, we can no longer use sizeof to get its full size.

C Parameters

If you are performing an operation with some input and do not care about any changes to the input, pass the data type itself.

If you are modifying a specific instance of some value, pass the location of what you would like to modify.

STACK

Address Value

-------- ------

0x1f0 3 <- x

0x10 0x1f0 <- intPtr (pointer to x)

Why Use Pointers?

Enables pass-by-reference style (not native in C).

Efficient for memory manipulation.

Required for working with dynamically allocated memory.

When to Pass by Value vs. Pointer

| Intent | How to Pass | Example |

|---|---|---|

| Read-only access | Value | int x |

| Modify caller’s variable | Pointer | int *x |

void doubleNum(int *x) {

*x = *x * 2;

}

int main() {

int num = 2;

doubleNum(&num);

printf("%d", num); // Prints 4

}

Arrays and Memory

Arrays Are Contiguous Memory Blocks

char str[] = "apple"; // 'a' 'p' 'p' 'l' 'e' '\0'

Array Behavior

- Cannot reassign array: nums = nums2; is illegal.

- sizeof(array) gives full size only inside the same scope

- Array Passed to Function Becomes Pointer

void myFunc(char *str) {

// sizeof(str) == 8, just a pointer

}

example:

char str[] = "apple";

Address Value

-------- ------

0x100 'a'

0x101 'p'

0x102 'p'

0x103 'l'

0x104 'e'

0x105 '\0'

Pointer Arithmetic Visualization

char *str = "apple"; // stored in .data segment

str + 1 --> points to "pple"

str + 3 --> points to "le"

DATA SEGMENT

Address Value

-------- ------

0xff0 'a' <- str

0xff1 'p'

0xff2 'p'

0xff3 'l'

0xff4 'e'

0xff5 '\0'

Arrays of Pointers

Used to group multiple strings (e.g., argv in main(int argc, char *argv[])).

Pointer Arithmetic

Pointer arithmetic depends on the type of the pointer.

char *str = "apple";

char *str3 = str + 3;

printf("%s", str3); // Outputs "le"

int nums[] = {52, 23, 12, 34};

int *nums3 = nums + 3;

printf("%d", *nums3); // 34

str[2] == *(str + 2)

// Pointer Difference Gives Element Offset, Not Bytes

int diff = nums3 - nums; // 3, not bytes!

const Keyword

Use const to declare global constants in your program. This indicates the variable cannot change after being created.

Sometimes we use const with pointer parameters to indicate that the function will not / should not change what it points to. The actual pointer can be changed, however.

// This function promises to not change str’s characters

int countUppercase(const char *str) {

int count = 0;

for (int i = 0; i < strlen(str); i++) {

if (isupper(str[i])) {

count++;

}

}

return count;

}

By definition, C gets upset when you set a non-const pointer equal to a const pointer. You need to be consistent with const to reflect what you cannot modify.

Structs

Basic Struct

struct date {

int month;

int day;

};

With typedef

typedef struct {

int month;

int day;

} date;

date today = {1, 28};

Passing Structs

- By value: copies the entire struct.

- By pointer: modifies the original.

Arrays of Structs

typedef struct {

int x;

char c;

} my_struct;

my_struct array_of_structs[5];

array_of_structs[0] = (my_struct){0, 'A'};

Ternary Operator

A shorthand conditional expression.

int x = (argc > 1) ? 50 : 0;

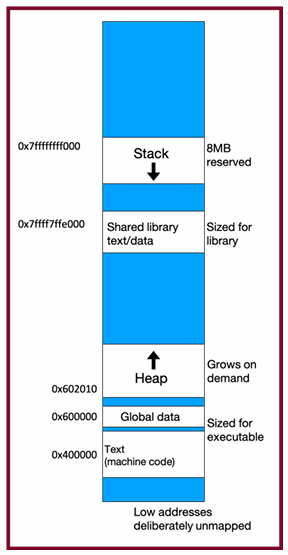

Memory Layout

- The stack is the place where all local variables and parameters live for each function. A function’s stack “frame” goes away when the function returns.

- The stack grows downwards when a new function is

called, and shrinks upwards when the function is

finished

The Stack

- Used for function call frames, parameters, local variables

- LIFO (last-in-first-out): frames are pushed/popped on call/return

Interesting fact: C does not clear out memory when a function’s frame is removed. Instead, it just marks that memory as usable for the next function call. This is more efficient!

A stack overflow is when you use up all stack memory. E.g. a recursive call with too many function calls.

Heap Memory

We need a way to have memory that doesn’t get cleaned up when a function exits.

- Heap memory persists after function return

- You must manually free it

use malloc

void *malloc(size_t size);

void *means a pointer to generic memory. You can set another pointer equal to it without any casting.

Function Example

char *create_string(char ch, int n) {

char *new_str = malloc((sizeof(char) * (n + 1));

for (int i = 0; i < n; i++) {

new_str[i] = ch;

}

new_str[n] = '\0';

return new_str;

}

Memory Management Helpers

strdup

char *str = strdup("Hello");

// Allocates and copies string to heap

str[0] = 'h'; // OK

calloc

int *arr = calloc(5, sizeof(int)); // All elements zero-initialized

- malloc returns a pointer to a certain number of allocated bytes. It doesn’t

know or care whether it will be used as an array, a single block of memory, etc.

It just allocates and returns bytes for you. - If an allocation error occurs (e.g. out of heap memory!), malloc will return NULL. This is an important case to check, for robustness.

free() and Memory Leaks

Proper Memory Cleanup

char *bytes = malloc(4);

...

free(bytes); // Free once only!

Common Mistakes

Freeing same memory twice:

char *a = malloc(4);

char *b = a;

free(a);

free(b); // ERROR

Freeing offset address:

free(bytes + 1); // INVALID

realloc

Resizing Memory

char *str = strdup("Hello");

char *add = " world!";

str = realloc(str, strlen(str) + strlen(add) + 1);

strcat(str, add); // Now str = "Hello world!"

free(str);

- If successful, may move to a new location

- Old memory is automatically freed

- Only valid on pointers returned by malloc, calloc, strdup, realloc

Debugging Strategy

- Observe the bug

- Minimize input

- Narrow search space

- Use GDB and visualizations

- Experiment

- Fix and verify

Memory Leaks

- A memory leak occurs when you lose all references to heap-allocated memory.

- Doesn’t crash program—but consumes RAM.

- Tools like Valgrind can detect leaks.

- Don’t worry during prototyping; fix before submission.

Stack vs. Heap: Summary

| Feature | Stack | Heap |

|---|---|---|

| Lifetime | Automatic (function end) | Manual (malloc + free) |

| Speed | Fast | Slower |

| Size | Limited (~8MB) | Large (until system runs out) |

| Resizable? | No | Yes (realloc) |

| Safety | Compiler type-checks | You manage it |

Generics

Goal: To write C code that operates on any data type, reducing code duplication and improving reusability. Generic functions in C are used for various purposes like sorting, searching, and memory management.

The Need for Generic Swap

The lecture uses the example of a swap function to illustrate the need for generics.

Initially, type-specific swap functions are created (swap_int, swap_short, swap_string).

This leads to code duplication, as the logic for swapping is the same regardless of the data type.

void swap_int(int *a, int *b) {

int temp = *a;

*a = *b;

*b = temp;

}

void swap_short(short *a, short *b) {

short temp = *a;

*a = *b;

*b = temp;

}

void swap_string(char **a, char **b) {

char *temp = *a;

*a = *b;

*b = temp;

}

Generic Swap Implementation

The solution is to use void * to create a generic swap function.

void * is a pointer to an unknown data type, allowing the function to accept pointers to any type.

The generic swap function takes the addresses of the data to be swapped and the size of the data in bytes as arguments.

void swap(void *data1ptr, void *data2ptr, size_t nbytes) {

char temp[nbytes];

memcpy(temp, data1ptr, nbytes);

memcpy(data1ptr, data2ptr, nbytes);

memcpy(data2ptr, temp, nbytes);

}

temp[nbytes] creates a temporary buffer to hold the data.

memcpy is used to copy the data between the addresses and the temporary buffer because we can’t dereference a void * directly.

memcpy and memmove

memcpy: Copies n bytes from src to dest. It assumes no overlap between the source and destination regions.

void *memcpy(void *dest, const void *src, size_t n);

int x = 5;

int y = 4;

memcpy(&x, &y, sizeof(x)); // x becomes 4

memmove: Similar to memcpy but handles overlapping regions correctly.

void *memmove(void *dest, const void *src, size_t n);

Generics Pitfalls

Type Casting: void * needs to be cast to a specific type before dereferencing

Swap Ends

void swap_ends(void *arr, size_t nelems, size_t elem_bytes) {

swap(arr, (char *)arr + (nelems– 1) * elem_bytes, elem_bytes);

}

You’re asked to write a function that swaps the first and last elements in an array of numbers. Well, now it can swap for an array of anything!

Function Pointers

Void * Pitfalls

- void *s are powerful, but dangerous - C cannot do as much checking!

- E.g. with int, C would never let you swap half of an int. With void *s, this can happen!

int x = 0xffffffff;

int y = 0xeeeeeeee;

swap(&x, &y, sizeof(short));

// now x = 0xffffeeee, y = 0xeeeeffff!

printf("x = 0x%x, y = 0x%x\n", x, y);

Stack Structs

Each node can no longer store the data itself, because it could be any size!

Instead, it stores a pointer to the data somewhere else.

typedef struct node {

struct node *next;

void *data;

} node;

typedef struct stack {

int nelems;

int elem_size_bytes;

node *top;

} stack;

stack *stack_create(int elem_size_bytes) {

stack *s = malloc(sizeof(stack));

s->nelems = 0;

s->top = NULL;

s->elem_size_bytes = elem_size_bytes;

return s;

}

void stack_push(stack *s, const void *data) {

node *new_node = malloc(sizeof(node));

new_node->data = malloc(s->elem_size_bytes);

memcpy(new_node->data, data, s->elem_size_bytes);

new_node->next = s->top;

s->top = new_node;

s->nelems++;

}

void stack_pop(stack *s, void *addr) {

if (s->nelems == 0) {

error(1, 0, "Cannot pop from empty stack");

}

node *n = s->top;

memcpy(addr, n->data, s->elem_size_bytes);

s->top = n->next;

free(n->data);

free(n);

s->nelems--;

}

Function Pointers

Definition: A function pointer is a variable that stores the address of a function. This allows functions to be passed as arguments to other functions.

Syntax: The syntax for declaring a function pointer includes the return type and parameter types of the function it can point to.

return_type (*function_pointer_name)(parameter_types);

Generic Bubble Sort

// Comparison function for integers

int int_compare(const void *a, const void *b) {

return *(int*)a - *(int*)b;

}

void bubble_sort(void *arr, int n, int elem_size_bytes, int (*compare_fn)(const void *, const void *)) {

for (int i = 0; i < n - 1; i++) {

for (int j = 0; j < n - i - 1; j++) {

void *a = (char *)arr + j * elem_size_bytes;

void *b = (char *)arr + (j + 1) * elem_size_bytes;

if (compare_fn(a, b) > 0) {

// Swap elements

char temp[elem_size_bytes];

memcpy(temp, a, elem_size_bytes);

memcpy(a, b, elem_size_bytes);

memcpy(b, temp, elem_size_bytes);

}

}

}

}

int main() {

int nums[] = {5, 2, 9, 1, 5, 6};

int n = sizeof(nums) / sizeof(nums[0]);

bubble_sort(nums, n, sizeof(int), int_compare);

for (int i = 0; i < n; i++) {

printf("%d ", nums[i]); // Output: 1 2 5 5 6 9

}

printf("\n");

return 0;

}

Generic C Standard Library Functions

- qsort– I can sort an array of any type! To do that, I need you to provide me a function that can compare two elements of the kind you are asking me to sort.

- bsearch– I can use binary search to search for a key in an array of any type! To do that, I need you to provide me a function that can compare two elements of the kind you are asking me to search.

- lfind– I can use linear search to search for a key in an array of any type! To do that, I need you to provide me a function that can compare two elements of the kind you are asking me to search.

- lsearch- I can use linear search to search for a key in an array of any type! I will also add the key for you if I can’t find it. In order to do that, I need you to provide me a function that can compare two elements of the kind you are asking me to search.

- scandir– I can create a directory listing with any order and contents! To do that, I need you to provide me a function that tells me whether or not you want me to include a given directory entry in the listing. I also need you to provide me a function that tells me the correct ordering of two given directory entries.

Floating Point

- Fixed Point Representation: A method to represent real numbers by adding binary decimal places. It has limitations in the range of numbers it can represent.

- Floating Point Representation: A more flexible method to represent a wider range of real numbers, similar to scientific notation.

- IEEE Floating Point Standard: The most common standard for representing floating-point numbers.

- Floating Point Imprecision: Floating point numbers may introduce approximation and rounding errors.

- Floating Point Arithmetic: Arithmetic operations on floating point numbers can have unexpected behavior due to precision limits (e.g., non-associativity).

#include <stdio.h>

int main() {

float a = 0.1;

float b = 0.2;

if (a + b == 0.3) {

printf("Equal\n");

} else {

printf("Not Equal\n"); // This will print

}

printf("%.20f\n", a + b); // Output: 0.30000000000000004

return 0;

}

Conceptual - actual IEEE representation is more complex

Sign | Exponent | Mantissa

1 bit | 8 bits | 23 bits

Floating Point in C

- C Guarantees Two Levels– float single precision– double double precision

- Conversions/Casting– Casting between int, float, and double changes bit representation– double/float → int

- Truncates fractional part

- Like rounding toward zero

- Not defined when out of range or NaN: Generally sets to TMin

- int → double

- Exact conversion, as long as int has ≤ 53 bit word size

- int → float

- Will round according to rounding mode

Reading

What Every Computer Scientist Should Know About Floating-Point Arithmetic

IEEE-754 Floating Point Converter

About numbers, and how to get subtraction wrong

Fast Inverse Square Root — A Quake III Algorithm

Introduction to Assembly

Overview

Goals:

- Understand what assembly is and why it matters.

- Learn about the x86-64 architecture and its syntax.

- Introduce

movinstructions and how data is moved at the assembly level.

1. What is Assembly?

Definition:

Assembly is a human-readable representation of machine code.

- Compilers like GCC convert C code into assembly.

- Each line of C may generate multiple assembly instructions.

- Assembly is machine-specific (e.g., x86-64, ARM, MIPS).

C → Assembly Example:

int sum = x + y;

Assembly Abstraction:

1) Copy x into register R1

2) Copy y into register R2

3) Add R2 to R1

4) Store result from R1 to sum

2. Registers

Definition:

Registers are 64-bit storage locations inside the CPU used for fast temporary storage and operations.

Common Registers:

%rax, %rbx, %rcx, %rdx,

%rsi, %rdi, %rbp, %rsp,

%r8 to %r15

Usage:

- Store intermediate values

- Pass function arguments

- Hold return values

3. Viewing Assembly

Use objdump -d <program> to inspect the assembly code of a compiled program.

4. Our First Assembly: sum_array

C Code:

int sum_array(int arr[], int nelems) {

int sum = 0;

for (int i = 0; i < nelems; i++) {

sum += arr[i];

}

return sum;

}

Disassembled Assembly:

4005b6: ba 00 00 00 00 mov $0x0,%edx ; i = 0

4005bb: b8 00 00 00 00 mov $0x0,%eax ; sum = 0

4005c0: eb 09 jmp 4005cb ; jump to condition

4005c2: 48 63 ca movslq %edx,%rcx ; rcx = (long) edx

4005c5: 03 04 8f add (%rdi,%rcx,4),%eax ; sum += arr[i]

4005c8: 83 c2 01 add $0x1,%edx ; i++

4005cb: 39 f2 cmp %esi,%edx ; compare i < nelems

4005cd: 7c f3 jl 4005c2 ; loop

4005cf: f3 c3 repz retq ; return

Key:

%rdi:arr%esi:nelems%eax: return value / sum%edx: loop index

5. Assembly Instruction Format

Each instruction has:

- Opcode (operation): e.g.,

mov,add,cmp - Operands (data): e.g., registers, memory, constants

6. mov Instruction

mov src, dst

- Moves bytes from

srctodst - Only one memory operand allowed

7. Operand Types

Immediate:

mov $0x42, %rax

Moves constant value 0x42 into %rax.

Register:

mov %rbx, %rax

Copies contents of %rbx into %rax.

Absolute Address:

mov 0x104, %rax

Copies value at memory address 0x104 into %rax.

Indirect:

mov (%rbx), %rax

Copies value at the memory address stored in %rbx into %rax.

Base + Displacement:

mov 0x10(%rax), %rbx

Loads from memory address 0x10 + %rax.

Indexed:

mov (%rax,%rdx), %rcx

Loads from address (%rax + %rdx).

Indexed + Displacement:

mov 0x10(%rax,%rdx), %rcx

Loads from 0x10 + %rax + %rdx.

Scaled Indexed:

mov (,%rdx,4), %rax

Loads from 4 * %rdx.

mov 0x4(,%rdx,4), %rax

Loads from 0x4 + 4 * %rdx.

Scaled Indexed with Base:

mov (%rax,%rdx,2), %rcx

Loads from %rax + 2 * %rdx.

mov 0x4(%rax,%rdx,2), %rcx

Loads from 0x4 + %rax + 2 * %rdx.

General Address Form:

Imm(%rb, %ri, scale)

=> Address = Imm + R[%rb] + R[%ri] * scale

8. Practice Questions from Slides

Example Setup:

Assume:

- Memory at

0x42=5 %rbx=8%rax=0x100%rdx=3- Memory at

0x10C=0x11

Examples:

mov $0x42, %rax ; rax = 0x42

mov 0x42, %rax ; rax = 5 (from memory)

mov %rbx, 0x55 ; memory[0x55] = 8

mov 4(%rax), %rcx ; rcx = memory[0x104] = 0xAB

mov 9(%rax,%rdx), %rcx ; rcx = memory[0x100 + 3 + 9] = ?

9. Summary

Key Points:

- GCC turns C code into x86-64 assembly.

- Assembly uses registers and memory addresses directly.

movis the fundamental instruction to move data.- Assembly instructions work on bytes, not types.

- Understanding operand forms is critical for reading assembly.

readings

An Introduction to 64-bit Computing and x86-64

The story of Mel (Annotated version)

Arithmetic, Logic, and Condition Codes

1. Recap: What is Assembly?

- Assembly is a low-level, human-readable language that closely reflects the binary machine code executed by the CPU.

- Operates on registers—64-bit “scratchpad” storage inside the processor.

- Assembly instructions correspond to operations on data inside registers or memory.

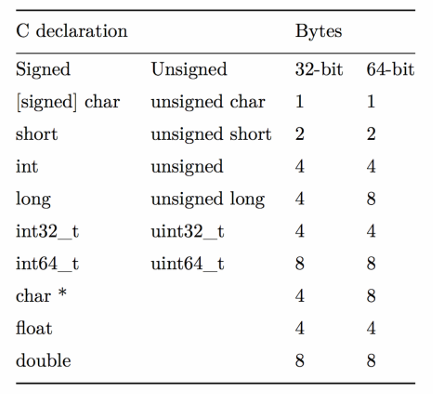

2. Data Sizes and Register Sizes

Terminology:

| Size Name | Bytes | Suffix |

|---|---|---|

| Byte | 1 | b |

| Word | 2 | w |

| Double | 4 | l |

| Quad | 8 | q |

Register Naming by Size (example with %rax):

| Size | Register |

|---|---|

| 64-bit | %rax |

| 32-bit | %eax |

| 16-bit | %ax |

| 8-bit | %al |

3. Register Responsibilities

%rax– Return value%rdi– First argument%rsi– Second argument%rdx– Third argument%rsp– Stack pointer (top of stack)%rip– Instruction pointer

4. mov Variants and Data Transfers

Suffixes:

movb,movw,movl,movq

Special Instructions:

movabsq $0x1122334455667788, %rax

- Use

movabsqto load a full 64-bit immediate constant into a register.

5. Zero and Sign Extension

movz (zero-extend)

| Instruction | Effect |

|---|---|

movzbw | byte → word |

movzbl | byte → double |

movzwq | word → quad |

movs (sign-extend)

| Instruction | Effect |

|---|---|

movsbw | byte → word |

movsbl | byte → double |

movslq | double → quad |

cltq ; Sign-extend %eax into %rax

6. lea: Load Effective Address

lea 6(%rax), %rdx

- Not a memory access — computes the address expression and places the result into the destination.

- Useful for address arithmetic.

lea vs mov Comparison:

| Expression | mov Meaning | lea Meaning |

|---|---|---|

6(%rax), %rdx | Read from memory at address | Compute 6 + %rax |

(%rax,%rcx),%rdx | Read from address %rax + %rcx | Compute %rax + %rcx |

(%rax,%rcx,4) | Read from %rax + 4 * %rcx | Compute %rax + 4 * %rcx |

7(%rax,%rax,8) | Read from 7 + %rax + 8 * %rax | Compute 7 + %rax + 8 * %rax |

7. Unary Arithmetic Instructions

incq 16(%rax)

dec %rdx

neg %rax

not %rcx

| Instruction | Description |

|---|---|

inc | Increment |

dec | Decrement |

neg | Negate (2’s complement) |

not | Bitwise NOT |

8. Binary Arithmetic and Logic

addq %rcx, (%rax)

xorq $16, (%rax, %rdx, 8)

subq %rdx, 8(%rax)

| Instruction | Effect |

|---|---|

add | D ← D + S |

sub | D ← D - S |

imul | D ← D * S |

xor | D ← D ^ S |

or | `D ← D |

and | D ← D & S |

9. Multiplication and Division

64-bit x 64-bit = 128-bit product:

- Result split across

%rdx:%rax.

imulq %rbx ; Signed multiply: %rax × %rbx → %rdx:%rax

mulq %rbx ; Unsigned multiply

10. Division and Remainder

Usage:

%rdx:%raxis the dividend- Operand is the divisor

%raxgets the quotient%rdxgets the remainder

cqto ; Sign-extend %rax into %rdx

idivq %rdi ; Signed divide

divq %rdi ; Unsigned divide

11. Bit Shifts

shll $3, (%rax) ; logical left shift

shr %cl, (%rax,%rdx,8) ; logical right shift

sar $4, 8(%rax) ; arithmetic right shift

| Instruction | Meaning |

|---|---|

sal / shl | Left shift |

shr | Logical right shift (zero-fill) |

sar | Arithmetic right shift (sign-extend) |

- Shift amount can be an immediate or stored in

%cl.

Note on %cl as Shift Amount:

%cl = 0xFFshlbuses only the lowest 3 bits → shift by 7shlwuses 4 bits → shift by 15- Higher-width shifts use more bits.

12. Code Examples from Lecture

C Code:

int add_to_first(int x, int arr[]) {

int sum = x;

sum += arr[0];

return sum;

}

Division Example:

long full_divide(long x, long y, long *remainder_ptr) {

long quotient = x / y;

long remainder = x % y;

*remainder_ptr = remainder;

return quotient;

}

Summary

This lecture focuses on control flow in assembly language. It builds upon previous knowledge of data movement, arithmetic, and logical operations to explain how assembly handles conditional execution (if/else) and loops (while, for). Key to this is the concept of “condition codes,” which are special registers that store the results of operations and are used by conditional jump instructions. The lecture also covers specific assembly instructions related to comparisons, setting bytes based on conditions, conditional moves, and jumps.

1. Recap: Arithmetic and Logic

- Some registers have special roles:

%rax: return value%rdi,%rsi,%rdx: function parameters 1-3%rip: next instruction address%rsp: stack top address

movinstruction variants (movb,movw,movl,movq) move data of different sizes.movlto a register also zeroes out the upper 4 bytes.leainstruction copies the address of the source, not the data at the address.nop(no-op) instruction does nothing; used for alignment.mov %ebx, %ebxzeroes out the top 32 bits of %rbx.xor %ebx, %ebxalso sets %ebx to zero (and may be more efficient).

2. Control Flow

- C uses

if,else,while,forfor control flow. - Assembly uses condition codes and conditional jumps to achieve this.

3. Condition Codes

- CPU has single-bit condition code registers.

- Common condition codes:

- CF (Carry Flag): Carry-out from most significant bit (unsigned overflow).

- ZF (Zero Flag): Result is zero.

- SF (Sign Flag): Result is negative.

- OF (Overflow Flag): Two’s-complement overflow.

- Arithmetic/logic instructions update condition codes.

leadoes not. Logical operations set CF and OF to 0. Shift operations set CF to the last bit shifted out and OF to 0.incanddecaffect OF and ZF but not CF. cmpandtestinstructions only set condition codes (don’t store results).cmpis like subtraction,testis like AND.CMP S1, S2: CalculatesS2 - S1and sets flags.TEST S1, S2: CalculatesS2 & S1and sets flags.

4. Assembly Instructions for Control Flow

- Setting Bytes Conditionally:

setinstructions set a byte to 0 or 1 based on condition codes.sete D/setz D: Set if equal / zero (ZF = 1)setne D/setnz D: Set if not equal / not zero (ZF = 0)sets D: Set if negative (SF = 1)setns D: Set if non-negative (SF = 0)setg D/setnle D: Set if greater (signed >) (SF = 0 and SF = OF)- (and others for >=, <, <=, unsigned >, >=, <, <=)

- Conditionally Moving Data:

cmovinstructions conditionally move data.cmove S, R/cmovz S, R: Move if equal / zero (ZF = 1)cmovne S, R/cmovnz S, R: Move if not equal / not zero (ZF = 0)cmovs S, R: Move if negative (SF = 1)- (and others corresponding to

setconditions)

- Jump Instructions:

jmpjumps to another instruction.jmp Label: Direct jump (target is in the instruction)jmp 404f8 <loop+0xb>

jmp *Operand: Indirect jump (target address is in a register/memory)jmp *%rax

- Conditional jumps (

je,jne,js,jns,jg, etc.) jump only if a condition is true.je Label/jz Label: Jump if equal / zero (ZF = 1)jne Label/jnz Label: Jump if not equal / not zero (ZF = 0)- (and others corresponding to

setconditions)

5. Implementing Control Flow

- If/Else:

- Assembly: Calculate condition, jump past

ifbody if false, jump pastelseafterifbody. - Example C:

if (num > 3) { x = 10; } else { x = 7; } num++; - Assembly structure:

; Test (num > 3) ; Jump past if-body if test fails ; If-body (x = 10) ; Jump past else-body ; Else-body (x = 7) ; Past else body ; num++;

- Assembly: Calculate condition, jump past

- While Loop:

- Assembly: Jump to the test, execute the body, test again, jump back to the body if the test succeeds.

- Example C:

void loop() { int i = 0; while (i < 100) { i++; } } - Assembly:

0x0000000000400570 <+0>: mov $0x0,%eax ; i = 0 0x0000000000400575 <+5>: jmp 0x40057a <loop+10> ; Jump to test 0x0000000000400577 <+7>: add $0x1,%eax ; i++ 0x000000000040057a <+10>: cmp $0x63,%eax ; i < 99? 0x000000000040057d <+13>: jle 0x400577 <loop+7> ; Jump if i <= 99 0x000000000040057f <+15>: repz retq

- For Loop:

- Assembly: Similar to

while, but initialization and update are also included. Compiler optimizations can change the exact structure. - C Equivalent While Loop

for (init; test; update) { body } init; while (test) { body update } - For Loop Assembly

Init Jump to test Body Update Test Jump to body if success - GCC For Loop Output

Initialization Test Jump past loop if fails Body Update Jump to test

- Assembly: Similar to

6. Optimizations

- Conditional moves can avoid jumps, which can be slow due to branch prediction.

Summary

Lecture 14 delves into how function calls are implemented in assembly language. It explains the crucial role of the stack in managing function calls, including parameter passing, local variable storage, and return addresses. The lecture also covers the mechanics of the %rip register (instruction pointer) and how it’s affected by function calls and returns. Key instructions like push, pop, callq, and retq are discussed in detail. The concept of register saving and restoring is introduced to ensure proper function execution.

1. Recap: Control Flow

- C control flow statements (if/else, while, for) are implemented in assembly using condition codes and conditional jumps.

- Condition codes (CF, ZF, SF, OF) store results of arithmetic/logic operations.

setinstructions, conditionalmovinstructions, and conditionaljmpinstructions use condition codes.jmpinstruction:jmp Label: Direct jump.jmp *Operand: Indirect jump.

2. The Instruction Pointer (%rip)

%ripregister stores the address of the currently executing instruction.- Instructions are just bytes in memory.

- Hardware increments

%ripto the next instruction. jmpinstructions modify%ripto change the flow of execution.- Relative jumps use offsets (e.g.,

jmp 0x40057a).

3. Calling Functions

- Calling a function involves:

- Passing control to the callee and resuming caller execution later.

- Passing data (parameters, return values).

- Managing memory (stack).

4. The Stack

%rspregister stores the address of the top of the stack.- Stack grows downwards in memory.

- Stack frames are used to store function data.

%rspmust be the same before and after a function call.push S:- Decrements

%rspby 8. - Stores the value of S at the new top of the stack.

- Decrements

pushq Sis equivalent tosubq $8, %rsp; movq S, (%rsp).pop D:- Reads the value from the top of the stack.

- Stores it in D.

- Increments

%rspby 8.

popq Dis equivalent tomovq (%rsp), D; addq $8, %rsp.

5. Passing Control

callq Label:- Pushes the return address (address of the next instruction in the caller) onto the stack.

- Jumps to the callee’s code (modifies

%rip).

retq:- Pops the return address from the stack.

- Jumps to the return address (modifies

%rip).

6. Passing Data

- Registers are used to pass parameters.

%rdi: 1st parameter%rsi: 2nd parameter%rdx: 3rd parameter%rcx: 4th parameter%r8: 5th parameter%r9: 6th parameter%raxis used to return the return value.

7. Local Storage

- The stack is used for local variables that don’t fit in registers.

- Callees can push data onto the stack.

8. Register Restrictions

- Callee-saved registers:

%rbp,%rbx,%r12-%r15Callees must preserve their original values. - If a callee modifies a callee-saved register, it must:

- Push the original value onto the stack before modifying it.

- Pop the original value from the stack before returning.

- Caller-saved registers: All other registers. The caller must save these if it wants to preserve their values across function calls.

Summary

Lecture 15 covers heap allocators, explaining their role in managing dynamic memory allocation via malloc, realloc, and free. It discusses the requirements and goals of heap allocators, including handling allocation/free sequences, tracking memory usage, and optimizing for throughput and memory utilization. The lecture then details three heap allocator implementation methods: Bump Allocator (focused on speed), Implicit Free List Allocator (using headers to track free/allocated blocks), and Explicit Free List Allocator (more efficient free block management). Finally, it briefly touches on compiler optimizations.

1. The Heap and Heap Allocators

- The heap is a memory area that grows upwards, used for dynamic memory allocation.

- Heap memory persists until explicitly freed, unlike stack memory.

- A heap allocator manages heap memory by fulfilling allocation requests.

- It receives a large memory block and divides it into smaller blocks for allocation.

- Key functions:

malloc(size_t size): Allocates a block of at leastsizebytes.free(void *ptr): Frees the allocated block atptr.realloc(void *ptr, size_t size): Resizes the allocated block atptr.

2. Heap Allocator Requirements

- Handle any sequence of allocate and free requests.

- Track allocated and free memory regions.

- Choose which free memory to use for allocation.

- Respond to allocation requests immediately.

- Return 8-byte aligned memory addresses.

3. Heap Allocator Goals

- Maximize throughput (requests served per unit time).

- Maximize memory utilization (efficient use of heap memory).

- Fragmentation reduces utilization (unused memory that can’t fulfill requests).

- Relocating allocated blocks is not allowed as it invalidates pointers.

- Throughput and utilization can be conflicting goals.

4. Bump Allocator

- A simple allocator that allocates the next available memory.

- Free operations are no-ops.

- High throughput, very poor utilization (no memory reuse).

- Example:

malloc(4)allocates 4 bytes.free()does nothing.

- Illustrates the throughput vs. utilization trade-off.

5. Implicit Free List Allocator

- Tracks allocated/free blocks using headers before each block.

- Header stores block size and allocation status.

mallocsearches for a free block and updates the header.freeupdates the block’s header to mark it as free.- Improves utilization over Bump Allocator by reusing freed memory.

6. Explicit Free List Allocator

- Uses a separate data structure (e.g., linked list) to track free blocks, improving efficiency of free block management.

7. Optimization

- (Briefly mentioned)

- Compiler optimizations can improve code efficiency.

Optimization

This lecture, CS107 Lecture 16, focuses on program optimization, aiming to help students understand how to improve code efficiency and speed and learn about the optimizations that the GCC compiler can perform.

What is Optimization?

Optimization is the process of making a program faster or more efficient in terms of time and space. While understanding Big-O notation provides a foundation for efficiency, targeted optimizations can lead to significant performance gains. However, it’s important to focus optimization efforts where they are most needed.

A general approach to optimization is summarized as follows:

- If doing something seldom and only on small inputs, do whatever is simplest to code, understand, and debug.

- If doing things thing a lot, or on big inputs, make the primary algorithm’s Big-O cost reasonable.

- Let gcc do its magic from there.

- Optimize explicitly as a last resort.

GCC Optimization

The GCC compiler can perform various optimizations at different levels. The lecture compares two levels:

gcc -O0: Primarily a literal translation of C code.gcc -O2: Enables nearly all reasonable optimizations.

Other, more aggressive or specialized optimization levels exist, such as -O3 (more aggressive, potentially trades size for speed), -Os (optimizes for size), and -Ofast (disregards standards compliance for maximum speed). A comprehensive list of GCC optimization flags is available in the GCC documentation.

Optimizations performed by GCC can target one or more of the following:

- Static instruction count

- Dynamic instruction count

- Cycle count / execution time

Here are some specific GCC optimizations discussed:

Constant Folding

Constant folding is the process where the compiler pre-calculates constant expressions at compile-time.

Example C code:

int seconds = 60 * 60 * 24 * n_days;

The constant expression 60 * 60 * 24 can be calculated by the compiler, so the compiled code will directly use the result (86400) instead of performing the multiplications at runtime.

Example with a more complex expression:

int fold(int param) {

char arr[5];

int a = 0x107;

int b = a * sizeof(arr);

int c = sqrt(2.0);

return a * param + (a + 0x15/c + strlen("Hello") * b - 0x37) / 4;

}

Before optimization (-O0), the assembly shows multiple instructions for calculating the expression:

0000000000400626 <fold>:

400626: 55 push %rbp

400627: 53 push %rbx

400628: 48 83 ec 08 sub $0x8,%rsp

40062c: 89 fd mov %edi,%ebp

40062e: f2 0f 10 05 da 00 00 movsd 0xda(%rip),%xmm0 # 400710 <_IO_stdin_used+0x10>

400635: 00

400636: e8 d5 fe ff ff callq 400510 <sqrt@plt>

40063b: f2 0f 2c c8 cvttsd2si %xmm0,%ecx

40063f: 69 ed 07 01 00 00 imul $0x107,%ebp,%ebp

400645: b8 15 00 00 00 mov $0x15,%eax

40064a: 99 cltd

40064b: f7 f9 idiv %ecx

40064d: 8d 98 07 01 00 00 lea 0x107(%rax),%ebx

400653: bf 04 07 40 00 mov $0x400704,%edi # "Hello"

400658: e8 93 fe ff ff callq 4004f0 <strlen@plt>

40065d: 48 69 c0 23 05 00 00 imul $0x523,%rax,%rax

400664: 48 63 db movslq %ebx,%rbx

400667: 48 8d 44 18 c9 lea -0x37(%rax,%rbx,1),%rax

40066c: 48 c1 e8 02 shr $0x2,%rax

400670: 01 e8 add %ebp,%eax

400672: 48 83 c4 08 add $0x8,%rsp

400676: 5b pop %rbx

400677: 5d pop %rbp

400678: c3 retq

After optimization (-O2), the assembly is significantly shorter, indicating many parts of the expression were folded into constants:

00000000004004f0 <fold>:

4004f0: 69 c7 07 01 00 00 imul $0x107,%edi,%eax

4004f6: 05 a5 06 00 00 add $0x6a5,%eax

4004fb: c3 retq

4004fc: 0f 1f 40 00 nopl 0x0(%rax)

Common Sub-expression Elimination

This optimization prevents recalculating the same expression multiple times by computing it once and storing the result.

Example C code:

int a = (param2 + 0x107);

int b = param1 * (param2 + 0x107) + a;

return a * (param2 + 0x107) + b * (param2 + 0x107);

The expression (param2 + 0x107) is a common sub-expression. GCC will calculate it once and reuse the result. The assembly shows the optimization taking place. This optimization can even occur at -O0.

00000000004004f0 <subexp>:

4004f0: 81 c6 07 01 00 00 add $0x107,%esi

4004f6: 0f af fe imul %esi,%edi

4004f9: 8d 04 77 lea (%rdi,%rsi,2),%eax

4004fc: 0f af c6 imul %esi,%eax

4004ff: c3 retq

Dead Code Elimination

Dead code elimination removes code that has no effect on the program’s output.

Examples of dead code:

- An

ifstatement with a condition that is always false:if (param1 < param2 && param1 > param2) - An empty

forloop:for (int i = 0; i < 1000; i++); if/elsestatements where both branches perform the same operation.if/elsestatements where theelsebranch is effectively unreachable or redundant.

Before optimization (-O0), the assembly includes instructions for the dead code:

00000000004004d6 <dead_code>:

4004d6: b8 00 00 00 00 mov $0x0,%eax

4004db: eb 03 jmp 4004e0 <dead_code+0xa>

4004dd: 83 c0 01 add $0x1,%eax

4004e0: 3d e7 03 00 00 cmp $0x3e7,%eax

4004e5: 7e f6 jle 4004dd <dead_code+0x7>

4004e7: 39 f7 cmp %esi,%edi

4004e9: 75 05 jne 4004f0 <dead_code+0x1a>

4004eb: 8d 47 01 lea 0x1(%rdi),%eax

4004ee: eb 03 jmp 4004f3 <dead_code+0x1d>

4004f0: 8d 47 01 lea 0x1(%rdi),%eax

4004f3: f3 c3 repz retq

After optimization (-O2), the dead code is removed in the assembly:

00000000004004f0 <dead_code>:

4004f0: 8d 47 01 lea 0x1(%rdi),%eax

4004f3: c3 retq

4004f4: 66 2e 0f 1f 84 00 00 nopw %cs:0x0(%rax,%rax,1)

4004fb: 00 00 00

4004fe: 66 90 xchg %ax,%ax

Strength Reduction

Strength reduction replaces computationally expensive operations with equivalent, less expensive ones. Examples include changing division to multiplication (by the reciprocal), or multiplication and modulo operations to bit shifts and bitwise AND when dealing with powers of two.

Example C code:

int a = param2 * 32; // multiplication can be replaced by left shift

int b = a * 7;

int c = b / 3;

int d = param2 % 2; // modulo by 2 can be replaced by bitwise AND with 1

for (int i = 0; i <= param2; i++) {

c += param1[i] + 0x107 * i; // multiplication in loop might be optimized

}

return c + d;

Code Motion

Code motion moves computations out of loops if their results do not change during the loop iterations.

Example C code:

for (int i = 0; i < n; i++) {

sum += arr[i] + foo * (bar + 3);

}

The expression foo * (bar + 3) can be calculated once before the loop starts, as its value does not depend on the loop variable i.

Tail Recursion

GCC can sometimes optimize tail-recursive functions by converting them into iterative loops, avoiding the overhead of recursive function calls.

Example C code:

long factorial(int n) {

if (n <= 1) return 1;

else return n * factorial(n - 1); // This is not tail recursion

}

A tail-recursive version would have the recursive call as the very last operation in the function.

Loop Unrolling

Loop unrolling reduces loop overhead by performing multiple iterations of the loop body within a single iteration of the compiled loop.

Example C code:

for (int i = 0; i <= n - 4; i += 4) {

sum += arr[i];

sum += arr[i + 1];

sum += arr[i + 2];

sum += arr[i + 3];

} // after the loop handle any leftovers

This manually unrolled loop processes 4 elements in each iteration, reducing the number of loop condition checks and jumps. Compilers can often perform this optimization automatically.

Limitations of GCC Optimization

While powerful, GCC cannot optimize everything because it doesn’t have the same level of understanding of the code’s intent as the programmer.

Example:

int char_sum(char *s) {

int sum = 0;

for (size_t i = 0; i < strlen(s); i++) { // Bottleneck: strlen called in each iteration

sum += s[i];

}

return sum;

}

The bottleneck here is calling strlen(s) in every loop iteration. A programmer knows that the length of the string s does not change within the loop, and can manually optimize this by calculating the length once before the loop. While GCC might apply code motion to move strlen out of the loop in some cases, it cannot always guarantee this due to potential side effects or aliasing.

Another example:

void lower1(char *s) {

for (size_t i = 0; i < strlen(s); i++) { // Bottleneck: strlen called in each iteration

if (s[i] >= 'A' && s[i] <= 'Z') {

s[i] -= ('A' - 'a');

}

}

}

Again, strlen(s) is called repeatedly. GCC cannot move strlen out of this loop because the string s is being modified within the loop. Although the modification doesn’t change the string’s length, GCC cannot assume this. A programmer, knowing this, can manually optimize by calculating the length once before the loop.

Caching

Program performance is not solely limited by processor speed; memory access can be a significant bottleneck. Computer systems use a hierarchy of memory levels, ranging from very fast (registers) to very slow (disk). Data that is used more frequently is moved to faster memory levels.

Caching relies on locality:

- Temporal locality: Data that has been accessed recently is likely to be accessed again soon (co-located in time).

- Spatial locality: Data located near recently accessed data is also likely to be accessed (co-located in space).

A high cache hit rate is crucial for performance. Even a small percentage of cache misses can significantly impact the total memory access time.

Resources

Here are useful materials and references about the tools and topics covered in CS107.

The Emacs Editor

Click here for a walkthrough video.

Click here for an Emacs reference card.

emacs is the command-line text editor used in CS107 to work on projects. It is a text editor that was modified by Richard Stallman (also see Richard Stallman on Wikipedia, who has an interesting outlook on life. This guide can get you up and running with emacs!

Getting Started

Before you start using emacs, you’ll want to configure it to use the CS107 default preferences; this sets up the editor to do things like use mouse controls, display line numbers, standardize indentation, etc. To do this, execute the following command after logging into myth:

wget https://cs107.stanford.edu/resources/sample_emacs -O ~/.emacs

emacs looks for a special .emacs file on your system to know what preferences you would like, and this command downloads our pre-made .emacs file and puts it on your system. If you don’t do this, you won’t be able to use some of the shortcuts and commands highlighted in lecture and the guides.

Bonus: Custom Themes

If you’re interested, you can further customize the default color theme. If you don’t like the theme chosen in our configuration, open any file in emacs, and then type M-x customize-themes (M means the “Meta” key, which is discussed later on). This will take you to a page where you can choose your own theme. Once there, pick a theme by moving your cursor onto a theme and hitting ENTER. Finally, move your cursor to Save Theme Settings and hit ENTER to save. Open a new file in emacs to see what the theme looks like.

Overview

Emacs works similarly to other editors you might have used; it lets you enter and edit text, and has certain keyboard shortcuts to perform common commands. The two keys it uses for these shortcuts are Control and Meta (which is Alt, or Option on a Mac).

Before continuing: if you’re using a Mac, or a Windows computer with SecureCRT, make sure you have followed the instructions to configure your Meta key, as described in the logging-in guide. This will ensure that your Meta key is properly set to be Option (Mac) or Alt (Windows). Otherwise, some of the keyboard shortcuts below will not work!

Opening emacs

To open a file in emacs (or create a new one if a file with this name does not exist):

$ emacs filename

If emacs is already open and you would like to edit a different file, use the Ctl-x Ctl-f command. Again, if this file exists in your current directory it will open it, or it will create it if it does not already exist in your current directory.

You can open multiple files in emacs side-by-side (e.g. to copy-paste between them) by specifying multiple filenames when you open emacs:

emacs file1.c file2.c

Saving and Quitting emacs

Ctl-x Ctl-s save the current changes to a file. Ctl-x Ctl-w lets you save the file under a different name (like “Save As” in graphical editors).

Ctl-x Ctl-c quits Emacs. If you have unsaved changes, you will be asked whether or not you’d like to save your changes before quitting.

Navigating A File

You can use the mouse to navigate a file as you might expect in other graphical text editors. Specifically, you can click to position the cursor, and use the mouse to scroll through the file. You can also use the arrow keys to navigate the file if you would prefer.

There are also several additional keyboard shortcuts to navigate within a file:

Meta-f or Meta-right arrow move to the next word

Meta-b or Meta-back arrow move to the previous word

Ctl-a or Meta-a move to the beginning of the line

Ctl-e or Meta-e move to the end of the line

Meta-g g [NUMBER] jump to a line number. For example, typing Meta-g g 123 would jump to line 123.

Ctl-v page down

Meta-v page up

Meta-< (less-than sign) jump to the start of the file

Meta-> (greater-than sign) jump to the end of the file

Searching

Search is another great way to move your cursor.

Ctl-s searches the file, and prompts you to enter text to search for.

Ctl-s move the cursor to the next search match

Ctl-r move the cursor to the previous search match

Meta-% find and replace text ahead of the current cursor position

Editing Text

You can type as normal to insert or delete text wherever the cursor is in the document. There are also additional shortcuts below for cut/copy/paste and selecting/deleting text:

Click and drag with the mouse to highlight text. (Note: the highlighting text feature will not highlight the text you have chosen until after you have released the mouse).

Ctl-SPACE to put a marker down, and move the cursor to select text

Ctl-w to cut (“kill”) the current selection Meta-w to copy the current selection Ctl-y to paste (“yank”) whatever is in the copy-paste buffer

Ctl-k cut (“kill”) the text on the current line to the right of the cursor

If you hit Ctl-k multiple times in a row (with no other commands between), all the lines will be passed when you hit Ctl-y.

Ctl-d to delete the character under the cursor. Backspace to delete the character before the cursor.

Undo/Redo

Ctl-x u undo the last action

Ctl-g cancel (if you’re stuck in a command or prompt, pressing this, sometimes several times, should get you out)

More Resources

The best way to get familiar with emacs is to just start using it to edit files - type something, anything! Over time, you’ll become more comfortable with the standard commands, and pick up more advanced ones. If you are looking for more references for how to use emacs, check out the following additional resources:

Ctl-his a command withinemacsthat opens the help menu where you can search for help for different commands.- Emacs Commands Reference Card

- Section 4 of this Stanford Unix Programming Tools document.

- Stanford Farmshare guide to emacs

Frequently Asked Questions

Do I have to use emacs? Can I use another text editor?

While there are a variety of text editors available, both command-line editors and GUI editors, emacs is the editor we choose to work on projects in CS107. It works similarly to other text editors you might have already used, and because you are always editing your files on the myth machines via ssh, it means that there is less risk of you losing your work. It is also easy to learn other editors that you may be interested in once you learn emacs.

While we won’t prevent you from using a different editor if you do not want to use emacs, note that we only officially support using emacs in CS107 and can’t answer questions about other editors or configurations. In particular, we do not recommend using a local editor such as Sublime Text to edit remote files. This is because of risks associated with missed updates, weird synchronization issues, dangerous data loss, and more, which we have seen cause students to lose work. Additionally, learning a Unix-based editor is an essential skill, as a local editor setup may not always be available or appropriate.

Compiling Programs with Make

Click here for a walkthrough video.

In CS107, we will use a program called make to compile our programs. Make is a program that dates back to 1976, and it is used to build projects with dependencies such that it only recompiles files that have been changed, and avoids having to type lengthy compile commands. It is a single file that contains all the files and settings to compile a project and link it with the appropriate libraries.

For simple projects with uncomplicated settings, you can build without a makefile by directly invoking a compiler like GCC, e.g. gcc file1.c file2.c file3.c compiles three files and links them together into an executable named a.out. You could add flags such as -Wall (for warnings) or -std=gnu99 (to use the updated GNU99 specification), or -o [name] to set the name of the resulting executable. However, manually re-typing these compilation commands quickly becomes tedious as projects get even slightly complex, and it is easy to mistype or be inconsistent. Managing the build with a makefile is much more convenient and less error-prone.

For our purposes, you will not need to know too much about Make, except how to use it. All CS107 projects will be distributed with a pre-written Makefile which you can usually use as-is. What is very important, however, is that you need to remember to run make after any change to the source code of your programs – many students forget to run make and wonder why they get unexpected results from their programs, when it is simply that they never re-compiled their code after the changes!

The simplest way to use make is by typing make in a directory that contains a “Makefile” called, fittingly, Makefile:

$ make

gcc -g -Og -std=gnu99 -o hello helloWorld.c helloLanguages.c

$ ./hello

Hello World

Hallo Welt

Bonjour monde

Here is an example Makefile for the program above with the following files: hello.h, helloLanguages.c, helloWorld.c:

#

# A very simple makefile

#

# The default C compiler

CC = gcc

# The CFLAGS variable sets compile flags for gcc:

# -g compile with debug information

# -Wall give verbose compiler warnings

# -O0 do not optimize generated code

# -std=gnu99 use the GNU99 standard language definition

CFLAGS = -g -Wall -O0 -std=gnu99

hello: helloWorld.c helloLanguages.c hello.h

$(CC) $(CFLAGS) -o hello helloWorld.c helloLanguages.c

.PHONY: clean

clean:

rm -f hello *.o

Note that lines beginning with ‘#’ are comments, and are ignored when the makefile is processed.

The Makefile has rules that are followed to decide when to compile a program. In particular, the hello: line in the Makefile tells Make to re-compile the program if any of the three files (hello.c, helloLanguages.c, and hello.h) have changed. On the following line, which must begin with a tab and not spaces (if you don’t do this, you’ll get a “missing separator” error!), the compilation line runs. There are two variables in this Makefile, CC (the compiler), and CFLAGS (the flags that we are going to send to the compiler).

A commonly-included second rule, clean:, allows you to write make clean and remove all files associated with compiling that program. (The first rule listed in the Makefile is executed by default when you type make, which is why you don’t have to write make hello each time).

See how to compile with gcc for information about how the compilation happens.

Extra: More About Makefiles

While you don’t need to know much about Makefiles in order to compile your assignments, read on if you’re interested in learning more about the details behind writing a Makefile. Checking out makefiles from some real world projects is another interesting way to see make in action.

Below is a longer Makefile that might be used to build a larger project:

#

# A simple makefile for managing build of project composed of C source files.

#

# It is likely that default C compiler is already gcc, but explicitly

# set, just to be sure

CC = gcc

# The CFLAGS variable sets compile flags for gcc:

# -g compile with debug information

# -Wall give verbose compiler warnings

# -O0 do not optimize generated code

# -std=gnu99 use the GNU99 standard language definition

CFLAGS = -g -Wall -O0 -std=gnu99

# The LDFLAGS variable sets flags for linker

# -lm says to link in libm (the math library)

LDFLAGS = -lm

# In this section, you list the files that are part of the project.

# If you add/change names of source files, here is where you

# edit the Makefile.

SOURCES = demo.c vector.c map.c

OBJECTS = $(SOURCES:.c=.o)

TARGET = demo

# The first target defined in the makefile is the one

# used when make is invoked with no argument. Given the definitions

# above, this Makefile file will build the one named TARGET and

# assume that it depends on all the named OBJECTS files.

$(TARGET) : $(OBJECTS)

$(CC) $(CFLAGS) -o $@ $^ $(LDFLAGS)

# Phony means not a "real" target, it doesn't build anything

# The phony target "clean" is used to remove all compiled object files.

# 'core' is the name of the file outputted in some cases when you get a

# crash (SEGFAULT) with a "core dump"; it can contain more information about

# the crash.

.PHONY: clean

clean:

rm -f $(TARGET) $(OBJECTS) core

Let’s go through this makefile and see what’s there.

Macros

These are the substitutions defined toward the top of the makefile (lines that look like CFLAGS = -g -Wall). They are similar to #define statements in C, and should be used for any expression which is likely to be used repeatedly in a makefile. Once a macro has been assigned, we can reference it later using $(MACRO_NAME) (e.g. $(CFLAGS) in the example above). When we type make in a terminal, the file parser will simply replace these macro references with the assigned content.

Diving deeper, the line OBJECTS = $(SOURCES:.c=.o) defines the OBJECTS macro to be the same as the SOURCES macro, except that every instance of ‘.c’ is replaced with ‘.o’ - that is, this assignment is equivalent to OBJECTS = demo.o vector.o map.o. There are also two built-in macros used by the makefile, $@ and $^; these evaluate to demo and demo.o vector.o map.o, respectively, but we will need to learn a bit about targets before we find out why.

For clarity, it may be worth looking at the content of the makefile as the parser “sees” it, with comments removed and macros fully expanded. In this form, our sample makefile looks like:

demo : demo.o vector.o map.o

gcc -g -Wall -O0 -std=gnu99 -o demo demo.o vector.o map.o -lm

.PHONY: clean

clean:

rm -f demo demo.o vector.o map.o core

Targets

Following our makefile’s macro definitions, we see a number of targets. Targets and their associated actions are written in the form:

target-name : dependencies

action

The target name is generally the name of the file that will be produced when this target is built. The first target listed in a makefile is the default target, meaning that it is the target which is built when make is invoked with no arguments; other targets can be built using make [target-name] at the command line. It is also worth mentioning at this point that the Make utility recognizes a number of implicit targets, and in particular that each of our object files has an associated implicit target equivalent to:

[filename].o : [filename].c

$(CC) $(CFLAGS) -o [filename].o [filename].c

Much of the power of the Make utility comes from its handling of dependencies. The dependencies of a target are the files which need to exist and be up to date before the target itself can be built. In the example above, the demo target depends on three object files (each of which can be built with its own implicit target as specified). Make processes dependencies recursively; if particular dependency has an associated target, the Make utility will (re)build the dependency’s target before processing the parent target, ensuring that all dependencies are up to date before the parent target is processed. Thus, for our sample makefile, the command make demo actually behaves more like make demo.o ; make vector.o ; make map.o ; make demo (the recursion ends at dependencies which don’t have an associated target; this occurs if, for example, we’re depending on a source file like demo.c, as is the case with the demo.o target). The Make utility will then examine the timestamps of each file on which the parent target depends, and will build the parent target if any of these files have been changed more recently than the parent file (or if the parent file does not yet exist). In our case, this means that if the demo executable already exists in our directory, make demo will not do anything unless the directory’s object files need to be rebuilt during recursive dependency processing, which in turn will only occur if any of our source files (demo.c, vector.c, map.c) have been modified more recently than their associated object file was built. Thus if we haven’t modified any of our source files, invoking make demo repeatedly will only build the demo executable once. Furthermore, if we modify just one of our source files, we will only rebuild the associated object file, rather than all three object files. In large-scale projects, these sorts of optimizations can save hours of compilation time whenever a project is built.

Finally, each target has an associated command, which will be run in the shell in order to build the target. Generally, this is a command which invokes the compiler, but technically it can be any command which creates a file with the target’s name. When defining the command for a target, we also have access to a number of special macros, such as $@ and $^ above. We can see now that these macros evaluate, respectively, to the name of the current target and its list of dependencies. Other such target-dependent macros exist, and information on them is available in the Make documentation.

Phony targets: Note that the clean target in our sample Makefile doesn’t actually create a file named ‘clean’, and thus doesn’t fit the pattern which we’ve been describing for targets. Rather, the clean target is used as a shortcut for running a command which clears out the project’s build files (the ‘@’ at the beginning of the command tells Make not to print it to the terminal when it is being run). We flag targets like this by listing them as “dependencies” of .PHONY, which is a pseudo-target that we’ll never actually build. When the Make utility encounters a phony target, it will run the associated command automatically, without performing any dependency checks.

Even More

If you’re interested in learning even more about make, check out the following resources:

- the full make manual (from GNU)

- Section 2 of this Stanford Unix Programming Tools document

- the No-starch press “Gnu Make Book” (requires authentication, accesses Stanford’s subscription to Safari Books Online).

Compiling C Programs with GCC

Click here for a walkthrough video.

The compiler we will use for CS107 is called the “GNU Compiler Collection” (gcc). It is one of the most widely used compilers, and it is both Free Software, and available on many different computing platforms.

gcc performs the compilation step to build a program, and then it calls other programs to assemble the program and to link the program’s component parts into an executable program that you can run. We will learn a bit about each of those steps during CS107, but the nice thing is that gcc can produce the entire executable (runnable) program for you with one command.

In CS107, we will predominantly use Makefiles to compile, assemble, and link our code, but the Makefile runs gcc to do the work. This is just a quick overview on how to compile and run your own programs should you decide to do so without a Makefile.

The simplest way to run gcc is to provide gcc a list of .c files:

$ gcc hello.c

$

Note that you do not put header files (.h) into the gcc command: it reads in the header files as it compiles, based on the #include statements inside .c files.

If the program compiled without errors or warnings, you don’t get any output from gcc, and you will have a new file in your directory, called a.out. To run this file, you need to tell the shell to run the file in the current directory, by using ./ before the name:

$ ./a.out

Hello, World!

$

We generally don’t want our programs named a.out, so you can give gcc an option, -o programName, to tell it what to name the runnable file:

$ gcc hello.c -o hello

$ ./hello

Hello, World!

$

Note: be careful not to accidentally input a runnable file name that is the same as your input file - something like:

$ gcc hello.c -o hello.c

On myth, your profile has been set up to catch the error and not compile it, leaving your source file in tact. This is not the case on many other Linux systems, so be careful! On other systems, GCC would overwrite your source file with the new executable.

gcc takes many different command line options (flags) that change its behavior. One of the most common flags is the “optimization level” flag, -O (uppercase ‘o’). gcc has the ability to optimize your code for various situations.